
MaturityAssessmentToolsArchitecture


Architecture

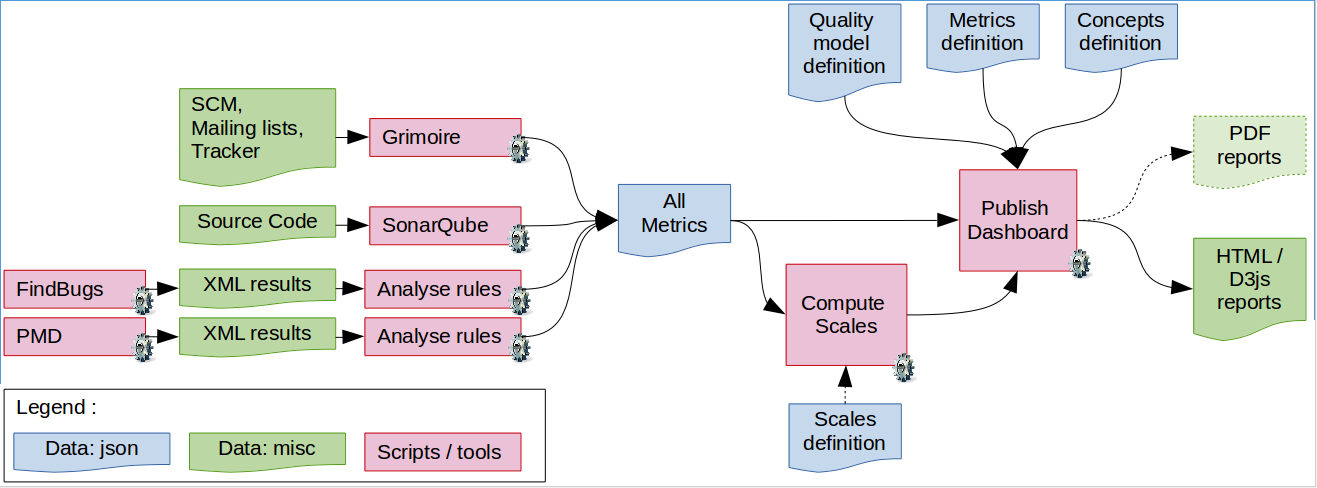

The process has been decomposed into a set of distinct sequential steps, with clearly defined data types between each important operation. The data formats act as both requirements and documentation for the system[1]. The overall process is pictured above.

The process flow is detailed as follows:

- Base metrics are provided by the various data providers:

  - Bitergia provides metrics for mailing lists, issue tracking systems and SonarQube.

  - Custom scripts retrieve information from the marketplace, the PMI and the rule-checking tools.

- The publish_web.pl script computes scales and aggregates values up to the root quality attributes. The script outputs a JSON file with normalised values for all nodes.

  - This data is typically used in turn for D3.js visualisation, or by any other rendering tool that can read JSON. For more complex computations, the same information can be processed with knitr to generate LaTeX PDF reports.

- The dashboard is generated from these values, with the associated documentation (read from the /data/ directory).
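The aggregation step can be illustrated with a minimal Python sketch. It is not the publish_web.pl algorithm. It assumes three things:

- every leaf already has a normalised value, passed in a dict keyed by mnemo;
- a parent's value is the unweighted mean of its active children;
- the result is stored in a field named "value", which is a hypothetical name.

```python
import json

# Hypothetical sketch of the aggregation step. It is NOT the publish_web.pl
# algorithm, only an illustration under the assumptions stated above.

def aggregate(node, leaf_values):
    """Store in node["value"] the mean of its active children, recursively.

    Leaves take their normalised value from `leaf_values` (keyed by mnemo).
    Nodes without any known value get None and are ignored by their parent.
    """
    children = [c for c in node.get("children", [])
                if c.get("active", "true") == "true"]
    if not children:
        value = leaf_values.get(node.get("mnemo"))
    else:
        scores = [aggregate(c, leaf_values) for c in children]
        scores = [s for s in scores if s is not None]
        value = sum(scores) / len(scores) if scores else None
    node["value"] = value  # hypothetical field name
    return value

qm = {"mnemo": "QM_QUALITY", "active": "true", "children": [
    {"mnemo": "QM_ACTIVITY", "active": "true", "children": [
        {"mnemo": "MLS_DEV_VOL_1M", "children": []},
        {"mnemo": "SCM_COMMITS_1M", "children": []},
    ]},
]}
aggregate(qm, {"MLS_DEV_VOL_1M": 3, "SCM_COMMITS_1M": 4})
print(json.dumps(qm, indent=2))  # every node now carries a "value" (3.5 at the root)
```

The real tool may weight children or round intermediate values. The unweighted mean was chosen here only because it keeps the example short.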

Files and data formats

The different document formats are exemplified in the /PolarsysMaturity/data directory of the repository.

Quality Model

Check /PolarsysMaturity/data/polarsys_qm.json for the full quality model description. The document is organised as a tree, with the sub-characteristics of each node listed in its "children" array.

```json
{
  "name": "PolarSys Quality Model",
  "version": "1.0.1",
  "children": [
    {
      "mnemo": "QM_QUALITY",
      "type": "attribute",
      "active": "true",
      "children": [
        {
          "mnemo": "QM_ECOSYSTEM",
          "active": "true",
          "type": "attribute",
          "children": [
            {
              "mnemo": "QM_ACTIVITY",
              "active": "true",
              "type": "attribute",
              "children": []
            }
          ]
        }
      ]
    }
  ]
}
```
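A quick way to inspect such a tree is to walk it depth-first and print the active nodes. The sketch below uses only the "mnemo", "type", "active" and "children" keys shown above. It embeds an abbreviated copy of the example; in practice you would load /PolarsysMaturity/data/polarsys_qm.json instead. It also assumes that an inactive node hides its whole subtree, which the page does not state explicitly.

```python
import json

# Abbreviated copy of the quality-model example above; in practice you would
# json.load() /PolarsysMaturity/data/polarsys_qm.json instead.
QM_JSON = """
{"name": "PolarSys Quality Model", "version": "1.0.1", "children": [
  {"mnemo": "QM_QUALITY", "type": "attribute", "active": "true", "children": [
    {"mnemo": "QM_ECOSYSTEM", "active": "true", "type": "attribute", "children": [
      {"mnemo": "QM_ACTIVITY", "active": "true", "type": "attribute", "children": []}
    ]}
  ]}
]}
"""

def walk(node, depth=0):
    """Yield (depth, mnemo, type) for each active descendant of `node`, depth-first."""
    for child in node.get("children", []):
        # "active" is stored as the string "true", not as a JSON boolean.
        # Assumption: an inactive node hides its whole subtree.
        if child.get("active") != "true":
            continue
        yield depth, child["mnemo"], child.get("type", "?")
        yield from walk(child, depth + 1)

qm = json.loads(QM_JSON)
for depth, mnemo, node_type in walk(qm):
    print("  " * depth + f"{mnemo} ({node_type})")
```

Run on the example, this prints QM_QUALITY, QM_ECOSYSTEM and QM_ACTIVITY, each indented one level deeper than its parent.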

Attributes

Check /PolarsysMaturity/data/polarsys_attributes.json for the full list of quality attributes. The document simply holds an array of attributes in its "children" element.

```json
{
  "name": "PolarSys Attributes",
  "version": "1.0.1",
  "children": [
    {
      "name": "Project Quality",
      "mnemo": "QM_QUALITY",
      "desc": [
        "The overall quality of the project. In the context of embedded software, this is often assimilated to Maturity."
      ]
    },
    {
      "name": "Ecosystem",
      "mnemo": "QM_ECOSYSTEM",
      "desc": [
        "The degree of maturity for the ecosystem evolving around the project: is it active, diversified, responsive?"
      ]
    }
  ]
}
```
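Quality-model nodes carry only a mnemo, so their names and descriptions have to be looked up in the definition files. Below is a minimal sketch that indexes a definition file by mnemo. The question and metric files described below share the same name/version/children layout, so the same helper should apply to them. Rejecting duplicate mnemos is a design choice of this sketch, not a documented rule.

```python
import json

# Abbreviated copy of the attributes example above.
ATTRIBUTES_JSON = """
{"name": "PolarSys Attributes", "version": "1.0.1", "children": [
  {"name": "Project Quality", "mnemo": "QM_QUALITY",
   "desc": ["The overall quality of the project."]},
  {"name": "Ecosystem", "mnemo": "QM_ECOSYSTEM",
   "desc": ["The degree of maturity for the ecosystem evolving around the project: is it active, diversified, responsive?"]}
]}
"""

def index_by_mnemo(doc):
    """Return a dict mapping each entry's mnemo to the entry itself.

    Duplicate mnemos are rejected, since a quality-model node refers to
    its definition by mnemo only (design choice of this sketch).
    """
    index = {}
    for entry in doc.get("children", []):
        mnemo = entry["mnemo"]
        if mnemo in index:
            raise ValueError(f"duplicate mnemo: {mnemo}")
        index[mnemo] = entry
    return index

attributes = index_by_mnemo(json.loads(ATTRIBUTES_JSON))
print(attributes["QM_ECOSYSTEM"]["name"])          # Ecosystem
print(" ".join(attributes["QM_QUALITY"]["desc"]))  # "desc" is a list of text fragments
```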

Questions

Check /PolarsysMaturity/data/polarsys_questions.json for the full list of questions. The document simply holds an array of measurement concepts in its "children" element.

```json
{
  "name": "PolarSys Questions",
  "version": "1.0.1",
  "children": [
    {
      "name": "Code complexity",
      "mnemo": "CPX",
      "desc": [
        "The computational complexity of the code: control, data, inheritance."
      ]
    },
    {
      "name": "Control flow complexity",
      "mnemo": "CPX_CF",
      "desc": [
        "The computational complexity of the control flow structure in the code functions."
      ]
    }
  ]
}
```

Metrics

Check /PolarsysMaturity/data/polarsys_metrics.json for the full list of metrics. The document simply holds an array of metrics in its "children" element.

```json
{
  "name": "PolarSys Metrics",
  "version": "1.0.1",
  "children": [
    {
      "name": "Maximum depth of nesting",
      "mnemo": "NEST",
      "desc": [
        "The maximum depth of nesting counts the highest number of imbricated code (including conditions and loops) in a function. ",
        "Deeper nesting threatens understandability of code and induces more test cases to run the different branches."
      ],
      "scale": [1,2,3,4],
      "ds": "SonarQube"
    }
  ]
}
```
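The "scale" array is what lets raw metric values be normalised before aggregation. The page does not define its exact semantics, so the sketch below assumes the following:

- the four values are ascending thresholds that split raw values into five levels;
- a value equal to a threshold falls into the upper level;
- higher_is_better is a hypothetical flag that inverts the level for metrics such as NEST, where a lower raw value is preferable.

```python
from bisect import bisect_right

def scale_level(value, scale, higher_is_better=True):
    """Map a raw metric value to a level between 1 and len(scale) + 1.

    Assumptions (not taken from the PolarSys scripts): `scale` lists ascending
    thresholds, a value equal to a threshold falls into the upper bucket, and
    `higher_is_better` is a hypothetical flag flipping the level for metrics
    where a lower raw value is preferable.
    """
    if not scale:
        return None  # no scale defined for this metric
    level = bisect_right(scale, value) + 1
    if not higher_is_better:
        level = len(scale) + 2 - level
    return level

nest_scale = [1, 2, 3, 4]  # "scale" of the NEST metric above
for depth in (0, 3, 7):
    print(depth, scale_level(depth, nest_scale, higher_is_better=False))
```

With these assumptions, a nesting depth of 0 maps to level 5, a depth of 3 to level 2 and a depth of 7 to level 1.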

Scripts

A few utility Perl scripts have been written to help develop and use the quality model, concepts and metrics files. These scripts are commented well enough to be easily analysed and modified. A debug option, set in the script header, is also available.

Check quality model

The check_qm.pl script runs some basic checks on the quality model, attributes, concepts and metrics files. Checks mainly revolve around consistency, and also ensure that the mandatory fields are present.

Usage

When executed with the wrong number of arguments, the program displays a short usage message:

```
$ perl check_qm.pl
check_qm.pl json_qm json_attributes json_concepts json_metrics

Applies various checks to the quality model, concepts and metrics for the PolarSys Maturity task project.
```

Example of run

```
boris@castalia $ perl check_qm.pl ../../data/polarsys_qm.json ../../data/polarsys_attributes.json ../../data/polarsys_questions.json ../../data/polarsys_metrics.json
# Checking attributes definition file...
Number of base metrics: 20.
# Checking metrics definition file...
[WARN] metric DOPD has no scale.
[WARN] metric DOPT has no scale.
Number of base metrics: 77.
# Checking concepts definition file...
Number of concepts: 41.
# Checking quality model definition...
* Checking node QM_QUALITY
* Checking node QM_ECOSYSTEM
* Checking node QM_ACTIVITY
* Checking node MLS_DEV_ACTIVITY
* Checking node MLS_DEV_VOL_1M
* Checking node MLS_USR_ACTIVITY
* Checking node MLS_USR_VOL_1M
* Checking node SCM_ACTIVITY
* Checking node SCM_COMMITS_1M
* Checking node SCM_COMMITTED_FILES_1M
* Checking node QM_DIVERSITY
* Checking node MLS_DEV_ACTIVITY
* Checking node MLS_DEV_AUTH_1M
[ SNIP ]
* Checking node NCC_REU_IDX
* Checking node ROKR_REU
# Checks done.
```
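The kind of checks performed by check_qm.pl can be sketched in a few lines of Python. This is a hypothetical re-implementation, not a port of the Perl script:

- the list of mandatory fields is assumed from the examples above;
- the "[ERROR]" messages are invented for illustration;
- only the "[WARN] metric ... has no scale." wording mirrors the run shown above.

```python
# Hypothetical checks in the spirit of check_qm.pl; the real script's rules
# and messages may differ. Mandatory fields are assumed from the examples.
MANDATORY = ("name", "mnemo", "desc")

def check_definitions(kind, doc, problems):
    """Check one definition file and return its entries indexed by mnemo."""
    index = {}
    for entry in doc.get("children", []):
        mnemo = entry.get("mnemo", "?")
        for field in MANDATORY:
            if field not in entry:
                problems.append(f"[ERROR] {kind} {mnemo} has no {field}.")
        if kind == "metric" and not entry.get("scale"):
            problems.append(f"[WARN] metric {mnemo} has no scale.")
        index[mnemo] = entry
    return index

def check_qm(node, known, problems):
    """Report every quality-model node whose mnemo is not defined anywhere."""
    for child in node.get("children", []):
        if child.get("mnemo") not in known:
            problems.append(f"[ERROR] node {child.get('mnemo')} is not defined.")
        check_qm(child, known, problems)

problems = []
known = {}
known.update(check_definitions("attribute", {"children": [
    {"name": "Project Quality", "mnemo": "QM_QUALITY", "desc": ["..."]}]}, problems))
known.update(check_definitions("metric", {"children": [
    {"name": "Maximum depth of nesting", "mnemo": "NEST", "desc": ["..."]}]}, problems))
qm = {"children": [{"mnemo": "QM_QUALITY", "children": [
    {"mnemo": "NEST", "children": []},
    {"mnemo": "QM_TYPO", "children": []}]}]}
check_qm(qm, known, problems)
print("\n".join(problems))
# [WARN] metric NEST has no scale.
# [ERROR] node QM_TYPO is not defined.
```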

Describe quality model

The describe_qm.pl script reads the concepts and metrics definition files and outputs human-readable files, namely HTML and MediaWiki markup.

This script is no longer supported, since the publishing process now includes up-to-date documentation for all attributes, questions and metrics. See the generated dashboard for more information: http://dahboard.castalia.camp/

Analyse Rules

This script analyses the XML output of rule-checking tools (namely FindBugs and PMD) and produces a JSON file with all rule-related metrics.

Usage

```
boris@castalia analyse_rules 08:05:31 $ perl ps_rules_checking.pl
ps_rules_checking.pl --pmd-rules=/path/to/pmd_dir --pmd-file=/path/to/xml_file --finbugs-rules=/path/to/findbugs_dir --findbugs-file=/path/to/xml_file --output=mymetrics.json

where:
  -v|--verbose         Be verbose.
  -h|--help            Print usage and exit.
  -pr|--pmd-rules      The directory containing JSON PMD rules definition.
  -pf|--pmd-file       The PMD XML results file with violations.
  -fr|--findbug-rules  The directory containing JSON PMD rules definition.
  -ff|--findbugs-file  The FindBugs XML results file with violations.
  -o|--output          The file to write metrics to (JSON).
  -ov|--output-v       Write violations to specified files (optional).

This script parses the XML output of PMD and FindBugs and builds a json file with all rule-related metrics for the PolarSys Maturity Assessment process.
For more information see https://polarsys.org/wiki/MaturityAssessmentRules
```

Example of run

```
boris@castalia analyse_rules 08:05:37 $ perl ps_rules_checking.pl -pr ~/codebase/castalia/projects/pmd/rules/ -pf /media/maisqual/maisqual/my_projects/cdt/cdt_2014-09-06_pmd.xml -fr ~/codebase/castalia/projects/findbugs/rules/ -ff /media/maisqual/maisqual/my_projects/cdt/cdt_2014-09-06_findbugs.xml -o cdt_metrics.json
Executing ps_rules_checking.pl on Thu Sep 25 08:06:32 2014.
Reading rules file /home/boris/codebase/castalia/projects/pmd/rules//pmd_basic_rules.json..
Rules name: [PMD Basic Rules], version: [5.1.2].
Imported 23 rules from file.
Reading rules file /home/boris/codebase/castalia/projects/pmd/rules//pmd_coupling_rules.json..
Rules name: [PMD Coupling Rules], version: [5.1.2].
Imported 5 rules from file.
Reading rules file /home/boris/codebase/castalia/projects/pmd/rules//pmd_design_rules.json..
Rules name: [PMD Design Rules], version: [5.1.2].
Imported 54 rules from file.
Reading rules file /home/boris/codebase/castalia/projects/pmd/rules//pmd_empty_rules.json..
Rules name: [PMD Empty Rules], version: [5.1.2].
Imported 11 rules from file.
Reading rules file /home/boris/codebase/castalia/projects/pmd/rules//pmd_unused_rules.json..
Rules name: [PMD Unused Rules], version: [5.1.2].
Imported 5 rules from file.
Reading rules file /home/boris/codebase/castalia/projects/findbugs/rules//findbugs_rules.json..
Rules name: [FindBugs Rules], version: [3.0.0].
Imported 112 rules from file.
Imported a total of 210 rules.
Reading FindBugs XML file [/media/maisqual/maisqual/my_projects/cdt/cdt_2014-09-06_findbugs.xml]..
Reading PMD XML file [/media/maisqual/maisqual/my_projects/cdt/cdt_2014-09-06_findbugs.xml]..
Working on category metrics...
Writing metrics to file [cdt_metrics.json]...
```
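The core of this step, counting violations per rule in the PMD and FindBugs XML reports, can be sketched with Python's standard library. The sketch reads the "rule" attribute of PMD violation elements and the "type" attribute of FindBugs BugInstance elements. It strips any XML namespace, so it works whether or not a report declares one. The sample reports are hand-written and simplified. The output layout (pmd, findbugs and total keys) is hypothetical: the real script derives its metrics and categories from the JSON rule definition files.

```python
import json
import xml.etree.ElementTree as ET
from collections import Counter

def local_name(tag):
    """Strip the '{namespace}' prefix ElementTree adds to namespaced tags."""
    return tag.rsplit("}", 1)[-1]

def count_by(xml_text, element, attribute):
    """Count elements named `element` in an XML report, keyed by `attribute`."""
    root = ET.fromstring(xml_text)
    return Counter(el.get(attribute) for el in root.iter()
                   if local_name(el.tag) == element)

# Tiny hand-written reports, simplified from the PMD and FindBugs formats.
PMD_XML = """<pmd xmlns="http://pmd.sourceforge.net/report/2.0.0" version="5.1.2">
  <file name="Foo.java">
    <violation beginline="3" rule="EmptyCatchBlock" ruleset="Empty Code" priority="3">Avoid empty catch blocks</violation>
    <violation beginline="9" rule="EmptyCatchBlock" ruleset="Empty Code" priority="3">Avoid empty catch blocks</violation>
    <violation beginline="12" rule="UnusedLocalVariable" ruleset="Unused Code" priority="3">Avoid unused local variables</violation>
  </file>
</pmd>"""
FINDBUGS_XML = """<BugCollection version="3.0.0">
  <BugInstance type="NP_NULL_ON_SOME_PATH" priority="1" category="CORRECTNESS"/>
  <BugInstance type="DM_DEFAULT_ENCODING" priority="1" category="I18N"/>
</BugCollection>"""

pmd = count_by(PMD_XML, "violation", "rule")
findbugs = count_by(FINDBUGS_XML, "BugInstance", "type")
# Hypothetical output layout, for illustration only.
metrics = {"pmd": dict(pmd), "findbugs": dict(findbugs),
           "total": sum(pmd.values()) + sum(findbugs.values())}
print(json.dumps(metrics, indent=2, sort_keys=True))
```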

References

- [1] "It's all about the data." In 97 Things Every Software Architect Should Know, pp. 122-123. O'Reilly. ISBN 978-0-596-52269-8.